(404) Safety Not Found

Vanessa Gathecha

Digital spaces span a range of apps, software and websites and have become an integral part of our lives, used for connecting people, building communities, accessing news, acquiring and disseminating information, organizing and advocacy. Many of today's most widely used digital spaces trace back to 2004 with the launch of Facebook, followed by Reddit, Twitter, Instagram, Snapchat, TikTok and others; Meta's Facebook currently leads with over 2 billion daily active users. Over half of the world's population uses at least one of these platforms. This growth is largely attributed to the smartphone, as affordable handheld devices have made social media far more accessible through mobile apps.

Vulnerable communities in particular have relied on digital platforms as spaces where they can express themselves and their identities, organize collectively, and advocate for their rights without fear of repression or persecution. These digital environments play a meaningful role in enabling participation, civic engagement, and access to information across borders. However, the conditions that once made digital spaces empowering have gradually deteriorated, undermining the safety and utility of these platforms and limiting their ability to serve as open and protective environments for marginalized groups.

This blog focuses mostly on social media platforms because of their scale and influence; however, the issues discussed extend beyond social media and are present across the wider digital ecosystem.

The evolution of social media

Many of us remember major social media moments, from political events to pop culture, that have shaped our digital lives. These include former President Obama's election in 2008, the Arab Spring, the #MeToo movement, Black Lives Matter (described as the largest protest movement in American history), TikTok blowing up as people were cooped up indoors during the 2020 COVID-19 pandemic, and users animatedly sharing their passion for popular shows and films such as Game of Thrones, Squid Game and Black Panther. They even include extreme events such as the 2023 countdown of the estimated oxygen supply aboard OceanGate's Titan submersible, which had in fact already imploded.

Recently, young people in particular have taken to social media to organize and protest against bad governance, human rights violations and corruption in their countries, such as the #JulyRevolution in Bangladesh and the #RejectFinanceBill protests in Kenya, both in 2024. Elsewhere, politicians such as NYC Mayor Zohran Mamdani have built electoral campaigns on social media with positive spillover effects offline, while US President Trump has used platforms such as Twitter (now X) and Truth Social for major announcements. This has turned digital spaces into an arena for testing the Overton window, a concept that describes the range of ideas and policies that are politically acceptable to the mainstream at any given time (Vortex), in both domestic and foreign policy. These forms of political and civic participation have increased the need for deliberative technologies, since social media platforms were not designed primarily for civic engagement. Taiwan's government has successfully pursued this mission, strengthening its digital democracy.

The worldwide expansion of digital access has accelerated growth in industries such as advertising, where personal data is used to generate targeted ads. It has also given rise to new careers such as influencer marketing, where individuals with large online communities leverage their reach to increase the visibility and consumption of goods and services. Social commerce, a recent evolution on social media platforms, now allows brands to interact directly with consumers through in-app features such as TikTok Shop and integrations with e-commerce platforms like Shopify. This offers a more seamless shopping experience and has become a major contributor to the digital economy: the economic activity that results from billions of everyday online connections among people, businesses, devices, data, and processes. On the other hand, the constant stream of products and services appearing on our screens with every scroll has sparked concerns about consumerism. In response, counter-trends like deinfluencing are gaining traction as more people push back against excessive consumption.

Platforms (especially) in free fall

In recent years, social media platforms have come under scrutiny as ongoing research highlights the effects of digital spaces such as social media on individuals, communities and even democratic processes.

2026 has been described as social media's Big Tobacco moment, as Instagram and YouTube, owned by tech giants Meta and Google respectively, have become central to landmark US legal battles over claims that their platforms are addictive by design and cause harm, particularly to children. In a recent case, a jury found both companies liable for contributing to a young user's mental health struggles, marking one of the first times a court has held platforms accountable for the effects of their design rather than their content. The ruling is part of a broader wave of litigation, with similar cases emerging and growing calls to regulate features such as autoplay and infinite scroll. This moment builds on earlier warnings, including testimony before the US Senate from a Facebook whistleblower, who argued that the platform's design prioritised growth and profit at the expense of user safety, fuelling harm to children, deepening division, and undermining democratic processes.

Other digital platforms, such as X, have been hit with heavy fines by the European Commission for violating EU regulations, and TikTok was fined €345M by the Irish data protection regulator for violating children's privacy. Elsewhere, countries such as Turkey, Nigeria, France and Gabon have imposed temporary bans on some of these social media platforms, and in China's case permanent ones, on the grounds that they pose risks to users, information integrity, national security and democratic processes.

Longitudinal studies have mapped both the positive and negative effects of digital spaces, including various forms of abuse such as doxxing, cyberbullying, online grooming, hacking and impersonation. With the rise of generative AI (GenAI), we now also see new forms of abuse, including deepfakes, video- and image-based abuse, mis/dis/malinformation, sextortion and even technology-facilitated gender-based violence. Around the world, communities, educators, caregivers and civil society groups are calling for stronger policy and regulatory action on social media platforms. Their concerns centre on key design features, such as recommendation algorithms and engagement structures, that operate as black boxes. They argue that when design decisions carry such wide social, economic, political and cultural impact, public oversight, plural governance and adaptive regulatory frameworks are necessary.

Government oversight vs Government overreach

Understandably, when private companies collect, process and store user data and use it to inform business decisions, shape social trends and cultural influence, and even sway political sentiment, as was the case with Cambridge Analytica in Kenya, governments have to step in to protect users and regulate with urgency.

To enhance the safety of their citizens while advocating for a platform Duty of Care, some governments have moved quickly, proposing or passing new or updated laws such as Australia's ban on social media for children under 16, France's ban on social media for under-15s, and the US Children's Online Privacy Protection Act (COPPA), which prevents the collection of data from children under 13 without parental consent. More countries, including Denmark, Britain, Greece, Malaysia and Norway, are close to passing similar laws. In Sub-Saharan Africa, the AU has also adopted, through the Maputo Protocol, a Resolution on the Protection of Women Against Digital Violence; however, adoption of this and similar laws remains slow across the region.

Concerns about regulatory overreach also continue to shape global debates on the governance of digital spaces. Critics point to documented cases of expanded surveillance targeting dissidents, political actors, journalists, and activists, raising questions about how new regulatory powers might be used. Content moderation is another area of tension: governments in various regions have issued takedown requests, while platforms themselves have restricted or suspended accounts, shadow-banned content and restricted services. In some instances, decisions have reportedly been influenced by foreign government officials, as in the case of African Stream, a pan-African digital media organization operating primarily on social media platforms. Most of these governments cite national security to justify regulation without due process, a justification that is difficult to challenge because this "classified information" is rarely accessible, even for research purposes.

Optimized Systems, Marginalized People?

While this blog has focused mainly on social media platforms, the rapid adoption of AI is also reshaping public service delivery. Governments are increasingly turning to agentic AI to streamline processes and reduce inefficiencies such as excessive paperwork, bureaucracy, and siloed operations, a shift that can free up staff, budgets, and resources to focus on essential services. However, the pursuit of efficiency should not treat safety, accountability, and rights as tradeoffs in digital spaces. Because public-sector AI relies heavily on data, decisions about how that data is collected and stored, and about the shared ontologies that structure it, have real consequences for people's lives. Sensitive information tied to identity, social protection, and access to services must therefore be handled diligently. In some contexts, poorly governed data sharing has already put individuals at risk, raising concerns about safety and fundamental rights, as in the case of ethnic Rohingya refugees whose data was shared with the Myanmar government, the very country they had fled.

Recently, we have seen attempts to treat complex government systems as abstractions: feeding data into a single, opaque, interoperable system and treating empathy as a software vulnerability, à la The Matrix. These systems are not simulations; decisions made through them affect real people's livelihoods, access to services, and long-term wellbeing, as has been the case with the US Department of Government Efficiency (DOGE). When systems become detached from human realities, empathy is lost, undermining the promise of developing AI, or technology more broadly, for the public good. Integrating digital intelligence into planning, management, and decision-making can strengthen public-sector capacity, but it must be designed, deployed and used in ways that prioritize safety, protect rights, and remain responsive to the people it serves.

Cracking the code of digital safe spaces

Spaaij and Schulenkorf (2014) identified five dimensions of safe spaces: physical, psychological/affective, sociocultural, political, and experimental. In physical environments, safety is built into design, whereby architects, planners, and administrators assess physical, emotional, psychological, and financial risks to prevent harm. In the digital context, by contrast, safe spaces are often treated as a downstream problem, addressed through patchwork moderation and compliance measures that contort to suit the government of the day.

The importance we place on safety in digital spaces led the Edgelands Institute to launch a Global Research Sprint in late 2025, convening a group of interdisciplinary researchers and artists to recount and reimagine the concept of digital safe spaces. The format involved weekly anchoring sessions with experts on topics ranging from queer representation, data and user privacy, digital permanence, and the fluidity of identity to the significance of inclusive design, as well as weekly hands-on group research.

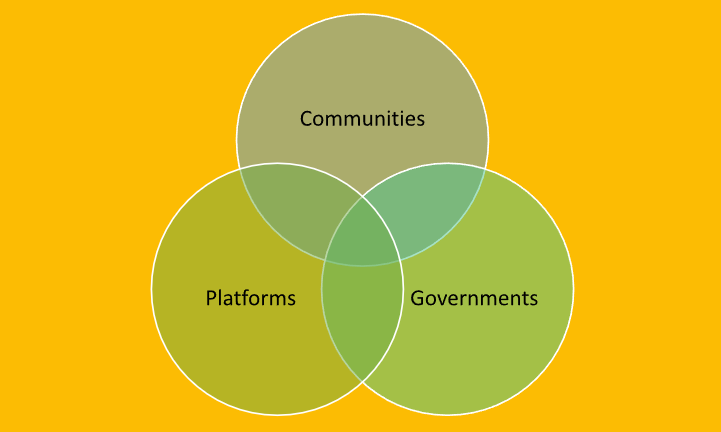

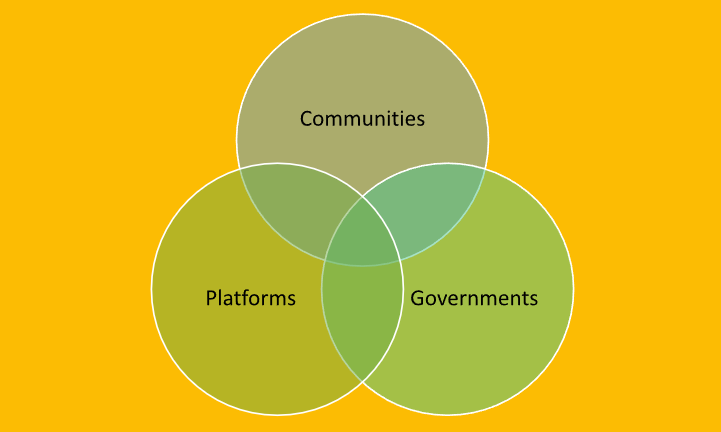

In its first co-creation exercise, this diverse group sought to identify what constitutes safety in day-to-day navigation of digital platforms by framing the question: "What design and regulatory measures should digital platforms adopt to protect and empower communities?" This led us to identify three core pillars for assessing digital safety: Platforms, Governments and Communities. Our goal was therefore to focus this research on the intersection of these stakeholders in digital spaces.

Platforms sit at the centre of online safety because they control the levers that shape user experience, from privacy settings to recommendation algorithms and content policies. Yet these systems are often designed around profits, high engagement metrics and platform-centric growth models, and only rarely around user protection. Design decisions often overlook local languages and cultural contexts, leaving support systems understaffed, lacking multilingual capacity, and ill-equipped to respond across regional, cultural and political contexts. In addition, platforms operate globally while laws remain fragmented and jurisdiction-specific, creating regulatory gaps and uneven enforcement. Terms and conditions also remain opaque, and new AI features are being rolled out without adequate guardrails; tools like xAI's Grok have been used to generate non-consensual sexual images, an example of how unchecked systems can cause real-life harm. Data management and governance questions continue to arise, as seen in reporting on TikTok's expanded data collection practices, while other platforms also hoover up our personal data to support algorithmic and business decisions.

As digital spaces have advanced, governments now view them not only as informal tools for communication but also as digital public infrastructure. What began as small-scale online communities built in a college dormitory for students to connect has become a set of global systems that shape public discourse, economic activity, political mobilisation and sociocultural exchange. Given these platforms' global scale and influence, governments are increasingly engaging in regulatory efforts around digital spaces to address power asymmetries and societal and systemic risks. These include concerns around political interference, fundamental freedoms, information integrity, electoral and governance processes, market concentration and monetisation practices, as well as the cross-border circulation and appropriation of cultural content. From the very start, the goal should be design oversight that is proactive, proportionate, human rights-respecting and adaptable to rapid technological and behavioural change.

Designing with empathy, as was echoed during the Research Sprint, requires proactive, structured feedback loops with virtual communities. Often overlooked but equally significant is the back end: fair, dignified working conditions should be upheld for the content moderators and data labellers whose labour underpins these systems, the majority of whom work in "digital sweatshops" in Global Majority countries. The harrowing work experiences of these "invisible labourers" are often reflected in the lack of "prosocial design", that is, systems that reinforce community wellbeing, build public trust, and promote safety.

On digital platforms, communities such as Facebook groups, Discord servers and subreddits play a central role in shaping what safety looks like in practice. They co-create codes of conduct, shared norms, and informal rules that reflect their specific values, priorities, and vulnerabilities, while meeting members' needs for connection and exchange. Volunteer moderators often act as the first line of defence, identifying harmful or inflammatory content, yet there is still no consistent, shared definition of "safety", particularly as dynamics such as shadow banning, weak systems of redress, and evolving forms of rage bait complicate users' ability to exercise discernment. Communities also navigate divergent uses of digital spaces, which raise questions of mutual respect and of how to participate authentically without self-censorship, while formal platform rules struggle to keep pace with changing human behaviour and rapid technological development. This raises broader design and policy concerns around adaptive governance, meaningful human oversight in automated systems, and fair, dignified support for the moderators and data labellers who sustain safety behind the screens.

Following the weekly anchoring sessions and research with mentors, the sprint participants developed a practical toolkit. Designed to complement ongoing research and policy conversations, it highlights key considerations for creating more inclusive online environments and ultimately digital safe spaces.

The Toolkit

This Toolkit is a practical guide and a call to build digital safe spaces. It envisions platform safety as grounded in universal human values and calls for co-created policy frameworks that actively incorporate plurality at every stage of a digital space's life cycle, from ideation and design to development, deployment, and use. Inclusive participation and deliberation are essential for protecting fundamental rights, enhancing trust and safety, and building systems that serve all users of digital spaces.

Insights from our Global Research Sprint draw directly from the lived experiences of participants and from survey responses representing a wide range of languages, races, geographies, sexual identities, and forms of neurodivergence. To ensure these perspectives can inform action across regions, the toolkit has been translated from English into Russian, Hindi, German, Spanish and Portuguese to support inclusive dialogue and policy engagement.

I would like to conclude this blog with sentiments I strongly co-sign from Professor James Mickens, Faculty Co-Director of the Institute for Rebooting Social Media, who reflected on how independent research into social media systems can be better supported and protected, especially in light of increasing platform opacity: "Community building is actually going to be quite critical as we move into this next phase of technology governance because … there's increasing centralization of the Internet. I think the only way to counterbalance that is to create other power centers, other collections of people who have power through their collective strength."

As conversations around digital safe spaces grow more complex, collaborative problem-solving, cross-pollination of ideas, and continual re-imagining as technologies and societies evolve may offer a clear(er) path forward. By bringing policymakers, researchers, technologists, and communities into shared discussions, we can shape digital safe spaces that are inclusive, resilient, and responsive to the needs of a global public.

This Toolkit was created to guide deeper, community-centered thinking on digital safe spaces. It is designed to help platform users, technologists, policymakers and other stakeholders understand how safety can and should be co-created by the people who inhabit digital spaces.
